AI prompt engineering is now part of how people learn, teach, research, and work. Yet most organizations still approach it with tools that were not built for learning. A learner writes a prompt, gets an answer, perhaps tries again, and moves on. The experience feels fast, but the learning is shallow. It rarely helps the learner understand why one prompt worked better than another, how different AI providers responded to the same instruction, or what specific improvement should be made in the next iteration.

That is the gap AI Response Lab is designed to address. The lab is not only a place to run prompts; it is a structured learning environment for prompt engineering. It gives learners a disciplined way to compare responses across providers, review the quality of their own prompts, reflect on what changed, and build a visible improvement journey over time. For instructors and institutional decision-makers, that distinction matters. The value of a lab tool is not just that it can connect to AI models. The value is that it can help people become better at using them.

A learner does not need another prompt box

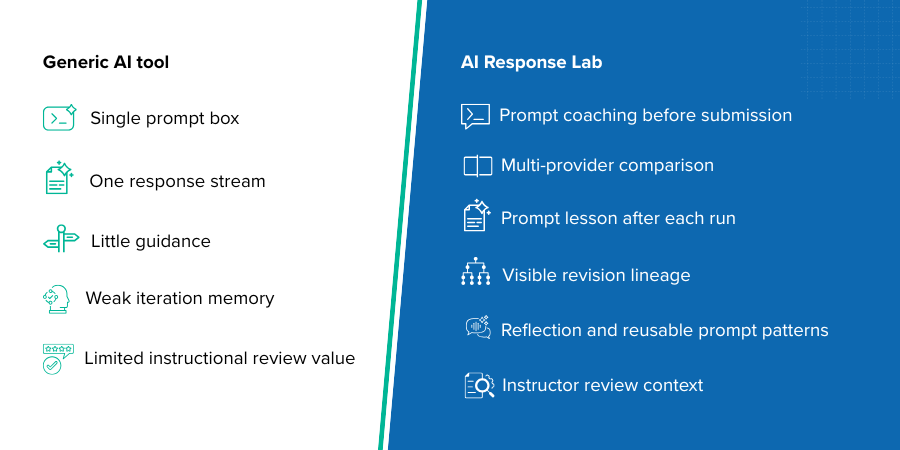

Many AI tools start and end with a simple input field. That may be enough for casual exploration, but it is not enough for serious education or workforce training. When a learner is developing prompt engineering skill, the challenge is not only generating output. The challenge is learning how to frame a task clearly, define the audience, specify the output shape, set constraints, and judge the quality of the response in a repeatable way.

AI Response Lab treats that as the core learning problem. Its learner-facing design is organized around the idea that prompt writing should be coached, comparison should be intentional, and every run should teach something. Instead of encouraging learners to chase whichever answer looks longest or most confident, the lab nudges them to compare structure, reasoning quality, usefulness, and fit for purpose. That changes the educational value of the activity: a prompt run becomes more than a one-time experiment. It becomes an observable exercise in judgment.

The lab teaches learners before they submit

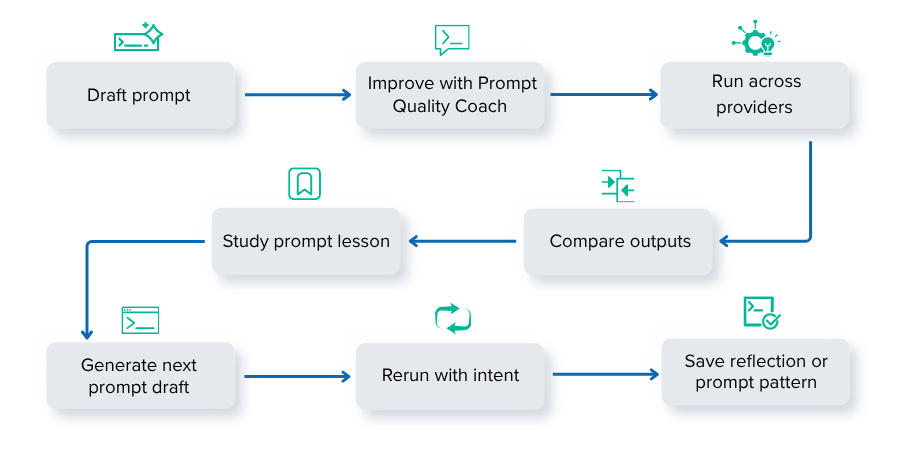

One of the strongest learner-facing aspects of AI Response Lab is that the guidance begins before the prompt is run. The Prompt Studio experience helps the learner think in terms of prompt construction rather than just task entry. It reinforces that a good comparison prompt should clearly define the job, the intended audience, the desired response format, and the success criteria.

That matters because many prompt engineering failures are preventable. Learners often submit vague requests, omit constraints, or forget to specify what a useful answer should look like. In a conventional tool, they only discover the problem after receiving weak outputs. AI Response Lab reduces that waste by offering a Prompt Quality Coach that surfaces readiness signals and improvement hints before submission. In practical terms, this means the learner is being taught how to strengthen the prompt at the moment the lesson is most relevant.

For institutions, this is important because it makes the tool more teachable at scale. Instructors do not have to manually repeat the same basic prompt-design advice in every session. The product itself reinforces the discipline.
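
To make the idea of a pre-submission readiness check concrete, here is a minimal sketch in Python. It is not how the Prompt Quality Coach is implemented; the `check_prompt` function, the `CHECKS` table, and the keyword cues are all hypothetical, chosen only to illustrate checking a draft for the four elements named above (job, audience, format, success criteria).

```python
from dataclasses import dataclass

# Hypothetical checklist covering the four elements a comparison prompt
# should define. The keyword cues are illustrative assumptions, not a
# real product rule set.
CHECKS = {
    "task": ("write", "summarize", "explain", "compare", "draft", "list"),
    "audience": ("audience", "for a ", "for an ", "readers", "students"),
    "format": ("format", "bullet", "table", "paragraph", "json", "words"),
    "success criteria": ("must", "should", "criteria", "avoid", "at most"),
}


@dataclass
class Readiness:
    score: float      # share of checklist items found, from 0.0 to 1.0
    hints: list[str]  # one improvement hint per missing item


def check_prompt(prompt: str) -> Readiness:
    """Flag which checklist items a draft prompt does not appear to cover."""
    text = prompt.lower()
    missing = [
        name for name, cues in CHECKS.items()
        if not any(cue in text for cue in cues)
    ]
    score = 1 - len(missing) / len(CHECKS)
    hints = [f"Consider stating the {name}." for name in missing]
    return Readiness(score, hints)


weak = check_prompt("Tell me about photosynthesis")
strong = check_prompt(
    "Explain photosynthesis for a class of ninth-grade students "
    "in five bullet points; each point must be under 20 words."
)
```

In this sketch the vague draft returns four hints and the fuller draft returns none. Keyword matching is deliberately crude; it only shows where a hint can be raised before any provider is called.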

Comparison is treated as a learning method, not a novelty

Cross-provider comparison is often marketed as a convenience feature. In AI Response Lab, it is positioned as a learning method. The learner writes a single clean prompt and sends the same task to selected providers. Because every provider receives identical instructions, this creates a fairer basis for evaluating differences in reasoning, structure, tone, and usefulness.

That is a much more serious use of comparison than simply asking, "Which model is best?" In a classroom, a university department, or a corporate training environment, what matters is whether learners can interpret differences responsibly. Why did one provider produce a clearer structure? Why did another drift from the requested format? Which answer is more actionable, and which one only appears more impressive because it is longer?

AI Response Lab supports that kind of reading by framing the comparison as evidence. The learner is not only choosing a winner. The learner is learning how prompt design influences model behavior.
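
The comparison step can be sketched as a simple fan-out: one prompt, several providers, and a row of comparable facts per response. This is an illustration under stated assumptions, not the lab's actual code. The `compare` function is hypothetical, and the two stub providers stand in for real provider clients, which would need to be wrapped to take a prompt string and return response text.

```python
from typing import Callable

# A provider is modelled as any function from prompt text to response text.
# The stubs further down are placeholders, not real provider APIs.
Provider = Callable[[str], str]


def compare(prompt: str, providers: dict[str, Provider]) -> list[dict]:
    """Send the identical prompt to every provider and collect comparable facts."""
    rows = []
    for name, ask in providers.items():
        response = ask(prompt)
        rows.append({
            "provider": name,
            "response": response,
            "word_count": len(response.split()),
            # Crude format check: does any line start with a bullet marker?
            "has_bullets": any(
                line.lstrip().startswith(("-", "*"))
                for line in response.splitlines()
            ),
        })
    return rows


def stub_a(prompt: str) -> str:
    return "- Point one\n- Point two"


def stub_b(prompt: str) -> str:
    return "Here is a long paragraph that ignores the requested bullet format entirely."


rows = compare(
    "List two points as bullets.",
    {"provider_a": stub_a, "provider_b": stub_b},
)
```

Here the second stub returns the longer response but misses the requested format, which is exactly the kind of difference the learner is meant to notice instead of rewarding length.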

The feedback loop is built into the run itself

Most prompt tools leave learners alone after the response arrives. AI Response Lab continues the lesson on the manage page. Each run can surface a prompt-engineering lesson that explains what the prompt did well, what should be sharpened next, and what experiment the learner should try in the next version. That is a substantial difference in educational design.

The lab does not assume that the learner will know how to improve from raw output alone. It translates the run into an explicit learning moment. If the prompt had enough context but weak output constraints, that can be surfaced. If the prompt produced usable comparisons but needs clearer success criteria, that can be surfaced as well. The tool then generates a next-prompt draft so the learner can move from observation to action without losing momentum.

This feature is especially valuable for prompt engineering because improvement is iterative by nature. Strong prompt writers do not usually arrive at the best version on the first try. They refine. They test. They compare. AI Response Lab supports that iterative workflow rather than forcing users to start from scratch each time.

Learners can see an improvement journey, not a pile of disconnected runs

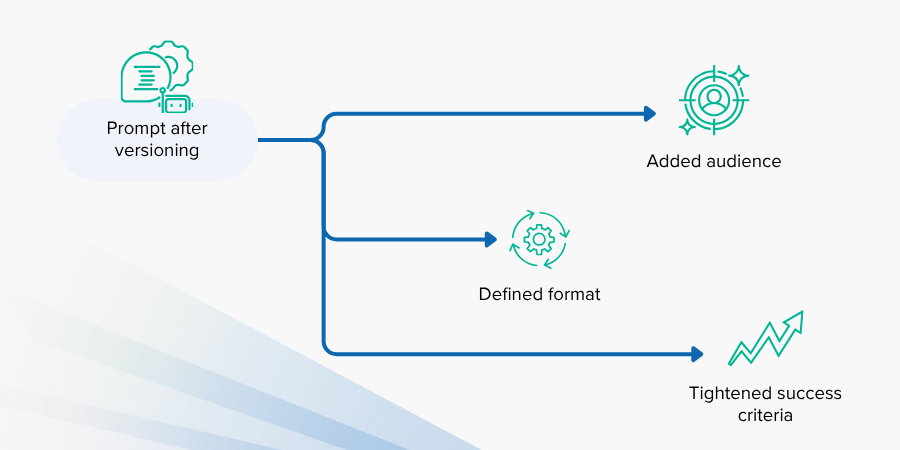

An important weakness in many AI environments is the lack of visible lineage. Learners run prompts repeatedly, but the iterations are disconnected. There is no clear record of what changed, why it changed, or whether the change improved the outcome.

AI Response Lab addresses this with run lineage and edit-as-new-run workflows. A learner can preserve the earlier run, create a new draft from it, and follow the revision chain over time. The instructional value is clear: prompt engineering becomes a documented process rather than a series of isolated attempts.

For educators and training leads, this makes learner progress easier to review. It becomes possible to see whether a participant is improving in how they frame tasks, define structure, and use evidence from previous comparisons. That is a stronger basis for assessment than looking at one final answer in isolation.
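
One way to picture run lineage is as a chain of immutable records, each pointing back to the run it was edited from. The sketch below assumes that model; the `Run` class and its `edit_as_new_run` and `lineage` methods are hypothetical names for illustration, not the product's data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Run:
    """One prompt run. Frozen so an earlier run is never changed in place."""
    prompt: str
    note: str = ""                  # what changed relative to the parent run
    parent: Optional["Run"] = None  # the run this one was edited from, if any

    def edit_as_new_run(self, prompt: str, note: str) -> "Run":
        """Create a new draft from this run while keeping this run intact."""
        return Run(prompt=prompt, note=note, parent=self)

    def lineage(self) -> list["Run"]:
        """Return the revision chain, oldest run first."""
        chain = []
        node: Optional["Run"] = self
        while node is not None:
            chain.append(node)
            node = node.parent
        return chain[::-1]


v1 = Run("Summarize the report.")
v2 = v1.edit_as_new_run(
    "Summarize the report in three bullets for executives.",
    note="added format and audience",
)
v3 = v2.edit_as_new_run(
    "Summarize the report in three bullets for executives; each bullet must name one risk.",
    note="added success criteria",
)

for step in v3.lineage():
    print(step.note or "original", "->", step.prompt)
```

Because each revision keeps a reference to its parent and a note on what changed, a reviewer can walk the chain from the first draft to the latest one and see why each step was taken.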

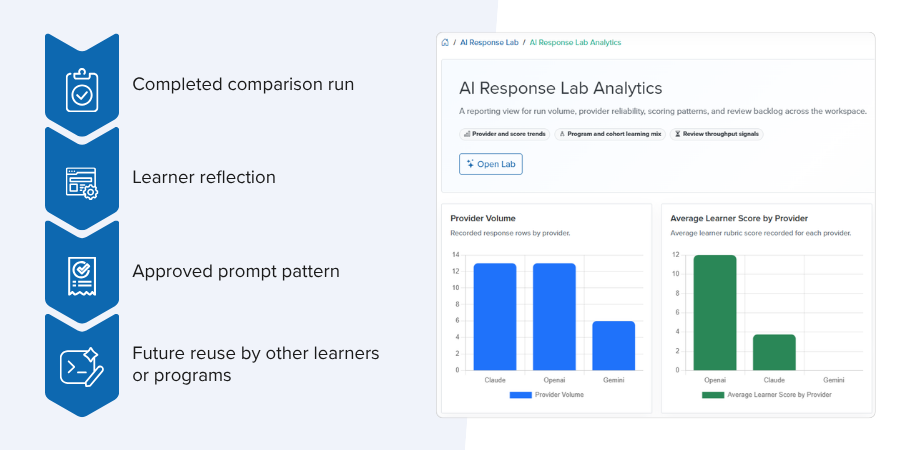

Reflection and pattern building make the learning reusable

Prompt engineering becomes more durable when learners pause to articulate what they have learned. AI Response Lab includes reflection workflows that help users capture what worked, what failed, and what they would change next. This is not reflective writing for its own sake. It is a way of converting experimentation into reusable knowledge.

The lab also supports prompt patterns and approved pattern libraries. When a strong prompt emerges from a successful comparison, it can become something others reuse rather than rediscover from scratch. Over time, this creates institutional memory. A department, program, or organization is no longer dependent on scattered personal know-how. It starts building a shared prompt practice.

That is one of the strongest reasons to choose AI Response Lab over a generic AI interface. Generic tools generate outputs. AI Response Lab helps generate capability.

It is useful for learners, but it is also accountable for instructors

Adoption decisions in educational institutions, university departments, and corporate organizations are rarely made solely on the basis of learner experience. Decision-makers also need reviewability, structure, and operational control. AI Response Lab is stronger here because it does not separate learner experimentation from instructional oversight.

Runs can carry program context. Histories can be reopened and reviewed. Instructors can score outputs, compare providers, and use the same run as the basis for guided feedback. The product is designed so that learner work can move into instructor review without losing the original prompt, context, or comparison record.

This gives the lab a practical advantage in real deployments. A tool becomes much easier to adopt when it supports both sides of the learning relationship: the learner who needs guided experimentation and the instructor who needs a review surface that is fast, legible, and pedagogically useful.

Why choose AI Response Lab as the lab tool for prompt engineering

The answer is not simply that it supports multiple AI providers. Many products can claim that. The stronger answer is that AI Response Lab is built around the actual skill of prompt engineering.

It helps learners design stronger prompts before submission. It lets them compare the same task across providers in a disciplined way. It teaches them how to read the results, not just how to generate them. It turns each run into a lesson, each revision into a visible improvement step, and each successful pattern into reusable institutional knowledge.

For an instructor, that means less time spent correcting avoidable prompt mistakes and more time spent teaching judgment. For a department or organization, it means prompt engineering can be delivered as a structured learning practice instead of an informal trial-and-error habit. For Batoi Academy, it positions AI Response Lab as more than a utility. It becomes a serious academic and professional learning tool.

In a period when AI fluency is becoming essential, that distinction matters. The best lab tool is not the one that merely produces responses. It is the one that helps people learn how to ask better questions, evaluate answers more intelligently, and improve with intention. AI Response Lab is compelling because it is built for exactly that purpose.

Batoi Corporate Office
